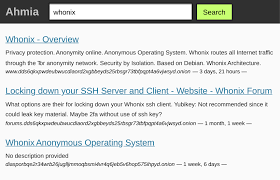

The Ahmia onion indexing system plays a unique role in how researchers discover onion services on the Tor network. Unlike broad dark web search tools that prioritize volume, Ahmia focuses on transparency, filtering, and research-safe indexing.

Rather than attempting to map the entire dark web, Ahmia documents how indexing happens, what gets indexed, and why some services are excluded. This approach reflects the realities of onion services, where instability, abuse, and anonymity consistently limit traditional crawling.

This article explains how Ahmia indexes onion services, what makes its system different, and why researchers rely on it for structured visibility rather than convenience.

What the Ahmia Onion Indexing System Is Designed to Do

Before examining the technical workflow, it helps to understand Ahmia’s purpose.

Unlike many dark web search engines, Ahmia is not designed to provide complete coverage. Instead, it functions as a research-oriented index that prioritizes legitimate, publicly reachable onion services.

For readers who need a broader foundation, see Dark Web Search Engines Explained.

With that context in place, Ahmia’s design choices become easier to understand.

Defining the Ahmia Onion Indexing System

At its core, the Ahmia onion indexing system is built around controlled discovery rather than aggressive crawling.

Specifically, the system focuses on:

- Public onion services

- Manual and automated validation

- Abuse reporting and filtering

- Snapshot-based indexing

In contrast to surface-web engines, Ahmia does not rely on backlink graphs or continuous re-crawling. Instead, it maintains a curated index that evolves slowly and deliberately.

How the Ahmia Onion Indexing System Discovers Onion Services

To begin with, Ahmia uses a combination of discovery methods rather than a single approach.

These include:

- Manual submissions from site operators

- Controlled Tor-based crawlers

- Community reporting mechanisms

Once discovered, each onion address is validated before it is indexed. This step significantly reduces users' exposure to harmful or deceptive content.
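Whatever the discovery channel, a submitted address has to be normalized and checked for well-formedness before deeper validation makes sense. The sketch below is illustrative, not Ahmia's code: it assumes version 3 onion addresses and follows the address layout in the Tor Project's rend-spec-v3 (a 32-byte public key, a 2-byte SHA3-256 checksum and a version byte, base32-encoded into 56 characters). The function names `normalize_onion` and `is_valid_v3_onion` are hypothetical.

```python
import base64
import hashlib
from typing import Optional
from urllib.parse import urlsplit

V3_LABEL_LEN = 56  # base32 characters in a v3 address, before ".onion"


def is_valid_v3_onion(label: str) -> bool:
    """Check the v3 layout: base32(pubkey[32] | checksum[2] | version[1])."""
    if len(label) != V3_LABEL_LEN:
        return False
    try:
        decoded = base64.b32decode(label.upper())
    except ValueError:  # binascii.Error is a subclass of ValueError
        return False
    pubkey, checksum, version = decoded[:32], decoded[32:34], decoded[34:]
    if version != b"\x03":
        return False
    expected = hashlib.sha3_256(b".onion checksum" + pubkey + version).digest()[:2]
    return checksum == expected


def normalize_onion(raw: str) -> Optional[str]:
    """Reduce a submitted URL or bare address to 'label.onion', or None if invalid."""
    raw = raw.strip().lower()
    if "://" not in raw:
        raw = "http://" + raw  # urlsplit only finds the host when a scheme is present
    host = urlsplit(raw).hostname or ""
    if not host.endswith(".onion"):
        return None
    label = host[: -len(".onion")].split(".")[-1]  # drop any subdomain
    return label + ".onion" if is_valid_v3_onion(label) else None
```

A well-formed address says nothing about whether a service is online or trustworthy; this check only filters out typos and malformed submissions before the costlier checks run.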

For a technical comparison, see How Onion Search Engines Index Dark Web Sites.

Validation and Filtering Mechanisms

Validation is where Ahmia diverges most clearly from other search engines.

Before indexing, Ahmia evaluates whether an onion service:

- Is publicly accessible

- Does not host known abuse material

- Is not an obvious phishing clone

- Remains reachable over time

Because of this filtering process, the index stays comparatively small, but the entries it does contain are more reliable. In other words, Ahmia sacrifices breadth in favor of trust.
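The four criteria can be expressed as a single gate over a service's probe history. This is a hypothetical sketch, not Ahmia's published logic: the `ProbeHistory` record, the hashed blocklist, the title-based clone heuristic and the `min_probes`/`min_uptime` thresholds are all assumptions chosen for illustration.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


@dataclass
class ProbeHistory:
    onion: str                                          # normalized "label.onion" address
    results: list[bool] = field(default_factory=list)  # True = page answered over Tor
    title: str = ""                                     # page title from the latest capture


def md5_hex(onion: str) -> str:
    """Hash an address so the blocklist never stores it in plain text."""
    return hashlib.md5(onion.encode("ascii")).hexdigest()


def passes_validation(
    h: ProbeHistory,
    blocklist_hashes: set[str],
    genuine_by_title: dict[str, str],
    min_probes: int = 3,
    min_uptime: float = 0.75,
) -> bool:
    # 1. Publicly accessible: the most recent probe must have succeeded.
    if not h.results or not h.results[-1]:
        return False
    # 2. No known abuse material: the address hash is absent from the blocklist.
    if md5_hex(h.onion) in blocklist_hashes:
        return False
    # 3. Not an obvious phishing clone: a well-known title served from the wrong address.
    genuine = genuine_by_title.get(h.title)
    if genuine is not None and genuine != h.onion:
        return False
    # 4. Reachable over time: enough probes and a high enough success ratio.
    if len(h.results) < min_probes:
        return False
    return sum(h.results) / len(h.results) >= min_uptime
```

Hashing blocklist entries lets a list of excluded addresses be shared without republishing the addresses themselves; MD5 serves here only as a lookup key, not as a security measure.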

Abuse Reporting and Index Hygiene

Another defining feature of the Ahmia onion indexing system is its abuse reporting model.

In practice, users can report onion services that host:

- Scam content

- Malicious impersonations

- Policy-violating material

Once flagged, services may be reviewed and removed from the index. As a result, index decay is reduced and researcher exposure to fake mirrors declines.
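A minimal sketch of a report-then-review flow, assuming reports are counted per distinct reporter and that only a human reviewer's decision removes a listing. The `AbuseQueue` class, the three category names (mirroring the list above) and the `review_threshold` value are hypothetical, not Ahmia's actual moderation rules.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field

REPORT_CATEGORIES = {"scam", "impersonation", "policy_violation"}


@dataclass
class AbuseQueue:
    index: set[str] = field(default_factory=set)  # onions currently listed
    review_threshold: int = 3                      # hypothetical cutoff
    reporters: defaultdict[str, set[str]] = field(
        default_factory=lambda: defaultdict(set)
    )

    def report(self, onion: str, reporter_id: str, category: str) -> bool:
        """Record a report; return True once the service should go to human review."""
        if category not in REPORT_CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        if onion not in self.index:
            return False  # not listed, nothing to act on
        self.reporters[onion].add(reporter_id)  # one vote per reporter
        return len(self.reporters[onion]) >= self.review_threshold

    def review(self, onion: str, confirmed: bool) -> None:
        """Apply a reviewer's decision: remove confirmed abuse, clear reports either way."""
        if confirmed:
            self.index.discard(onion)
        self.reporters.pop(onion, None)
```

Counting distinct reporters rather than raw reports makes it harder for a single party to get a legitimate service delisted by flooding the report form.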

For additional context, review Fake Onion Links: How Researchers Get Tricked.

Snapshot Indexing Instead of Live Crawling

Rather than relying on real-time crawling, Ahmia primarily uses snapshot-based indexing.

In this model, the system captures onion services at periodic intervals. This approach suits the unstable nature of Tor hosting and its frequent service turnover.

As a result, snapshot indexing allows researchers to:

- Observe historical presence

- Track service longevity

- Identify migration patterns

At the same time, it avoids placing unnecessary load on onion infrastructure.
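Because each snapshot records which services were reachable at a given time, the three research uses above reduce to simple comparisons between snapshots. The sketch assumes a snapshot is just a mapping from onion address to page title; the matching-title rule for detecting migrations is a rough heuristic chosen for illustration, and real captures would store far more.

```python
from __future__ import annotations

from datetime import date

# A snapshot maps each onion address captured on a given day to its page title.
Snapshot = dict[str, str]


def longevity(snapshots: list[tuple[date, Snapshot]]) -> dict[str, tuple[date, date]]:
    """First and last capture date for every onion seen in any snapshot."""
    spans: dict[str, tuple[date, date]] = {}
    for day, snap in sorted(snapshots, key=lambda s: s[0]):
        for onion in snap:
            first = spans[onion][0] if onion in spans else day
            spans[onion] = (first, day)
    return spans


def migrations(snapshots: list[tuple[date, Snapshot]]) -> list[tuple[str, str, date]]:
    """(old, new, day) when an onion vanishes and a new one with the same title appears."""
    ordered = sorted(snapshots, key=lambda s: s[0])
    found = []
    for (_, prev), (day, curr) in zip(ordered, ordered[1:]):
        vanished = {title: onion for onion, title in prev.items() if onion not in curr}
        for onion, title in curr.items():
            if onion not in prev and title in vanished:
                found.append((vanished[title], onion, day))
    return found
```

First and last sighting dates bound a service's observed lifetime, but they do not prove it was online continuously in between.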

Why Ahmia Avoids Broad Crawling

Broad crawling introduces several risks on the dark web.

For example, aggressive crawlers can:

- Trigger service shutdowns

- Violate operator expectations

- Increase exposure to harmful content

Therefore, by limiting crawl depth and frequency, Ahmia aligns with ethical research practices while still preserving access to legitimate services.
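Limiting crawl depth and frequency can be sketched as a breadth-first crawl with two caps: a maximum link depth from the seed pages and a minimum interval between requests to the same onion host. The `fetch_links` callback is a hypothetical stand-in for the real HTTP-over-Tor fetcher, and the default limits are illustrative, not Ahmia's settings. Links that leave the `.onion` namespace are dropped.

```python
from __future__ import annotations

import time
from collections import deque
from typing import Callable, Iterable
from urllib.parse import urljoin, urlsplit


def polite_crawl(
    seeds: Iterable[str],
    fetch_links: Callable[[str], Iterable[str]],
    max_depth: int = 2,
    min_interval_s: float = 10.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> set[str]:
    """Breadth-first crawl capped by link depth and a per-host request interval."""
    seeds = list(seeds)
    seen = set(seeds)
    queue = deque((url, 0) for url in seeds)
    last_hit: dict[str, float] = {}
    while queue:
        url, depth = queue.popleft()
        host = urlsplit(url).hostname or ""
        wait = last_hit.get(host, float("-inf")) + min_interval_s - clock()
        if wait > 0:
            sleep(wait)  # never hit the same onion more often than min_interval_s
        last_hit[host] = clock()
        links = fetch_links(url)  # hypothetical fetcher, e.g. HTTP through Tor
        if depth >= max_depth:
            continue  # keep this page, but follow none of its links
        for link in links:
            absolute = urljoin(url, link)
            on_onion = (urlsplit(absolute).hostname or "").endswith(".onion")
            if on_onion and absolute not in seen:
                seen.add(absolute)
                queue.append((absolute, depth + 1))
    return seen
```

Injecting `clock` and `sleep` keeps the scheduling logic testable without touching the network.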

Comparing Ahmia to Other Dark Web Search Engines

When compared to broader indexing platforms, Ahmia’s approach stands out.

For instance:

- Torch emphasizes volume with minimal filtering

- Haystak focuses on keyword persistence and trend analysis

These differences are explored further in Ahmia Dark Web Search Guide.

Thus, each tool serves a different research purpose rather than competing directly.

Role of Researchers and Analysts

Because of its structure, the Ahmia onion indexing system is widely used by:

- Academic researchers

- Journalists

- OSINT analysts

- Cybersecurity teams

However, professionals rarely rely on Ahmia alone. Instead, they combine it with monitoring strategies to improve accuracy.

For a layered approach, see Dark Web Monitoring Guide.

Limitations of Ahmia’s Index

Despite its strengths, Ahmia has clear limitations.

These include:

- Partial coverage by design

- Exclusion of private or invite-only services

- Slower index updates

Nevertheless, these constraints are intentional. Rather than being shortcomings, they reflect ethical and technical boundaries.

Ethical Considerations in Onion Indexing

Indexing onion services carries ethical responsibility.

Consequently, Ahmia’s filtering policies reflect broader concerns about:

- Harm reduction

- Privacy protection

- Research accountability

For this reason, the Ahmia onion indexing system prioritizes transparency over expansion.

Infrastructure Constraints on Tor

All onion indexing systems operate within Tor’s technical limits.

Specifically, Tor introduces:

- High latency

- Circuit rotation

- Bandwidth constraints

Therefore, surface-web crawling models remain impractical in this environment.
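In practice, these constraints mean a fetcher should use generous timeouts and treat a failed request as "try again later" rather than "gone". The sketch below wraps a hypothetical `fetch(timeout_s)` callable (for example, an HTTP request routed through a local Tor SOCKS proxy) in retries with exponential backoff and jitter; the attempt count and delays are assumptions, not values taken from Ahmia.

```python
from __future__ import annotations

import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def fetch_with_backoff(
    fetch: Callable[[float], T],
    attempts: int = 4,
    timeout_s: float = 60.0,      # generous: Tor circuits add seconds of latency
    base_delay_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> Optional[T]:
    """Retry a slow, flaky fetch; return None if every attempt fails."""
    for attempt in range(attempts):
        try:
            return fetch(timeout_s)
        except (TimeoutError, ConnectionError):
            if attempt == attempts - 1:
                break
            # Exponential backoff scaled by a jitter factor in [0.5, 1.5).
            sleep(base_delay_s * (2 ** attempt) * (0.5 + rng()))
    return None
```

Returning `None` instead of raising lets the caller record an unreachable probe, which feeds naturally into a reachability-over-time check.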

For authoritative context, see Tor Project: Onion Services Explained.

Authority Perspectives on Privacy and Research

From a privacy standpoint, the Electronic Frontier Foundation (EFF) highlights the importance of responsible search tools in anonymous environments.

Meanwhile, from a threat-monitoring perspective, Europol discusses the challenges of visibility within dark web ecosystems.

Together, these perspectives reinforce why Ahmia’s restrained design makes sense.

Current State of the Ahmia Onion Indexing System

Today, Ahmia represents one of the most transparent onion indexing platforms available.

Overall, its system is:

- Curated rather than comprehensive

- Research-oriented rather than consumer-focused

- Snapshot-based rather than real-time

This balance reflects years of iteration and refinement.

FAQs: Ahmia and Onion Indexing

Does Ahmia index all onion services?

No. It indexes only a filtered subset of publicly reachable services.

Is Ahmia safe for research use?

When used with Tor Browser and verification practices, yes.

Why do some services disappear from the index?

Onion services often shut down or migrate to new addresses, and some are removed for violating Ahmia's policies.

Conclusion on the Ahmia Onion Indexing System

In conclusion, the Ahmia onion indexing system demonstrates how dark web search tools adapt to an environment built to resist discovery. Rather than chasing completeness, Ahmia emphasizes validation, transparency, and ethical indexing.

Ultimately, for researchers, this approach provides structured visibility without undermining anonymity. Therefore, understanding how Ahmia indexes onion services is essential for interpreting results responsibly and avoiding false assumptions.